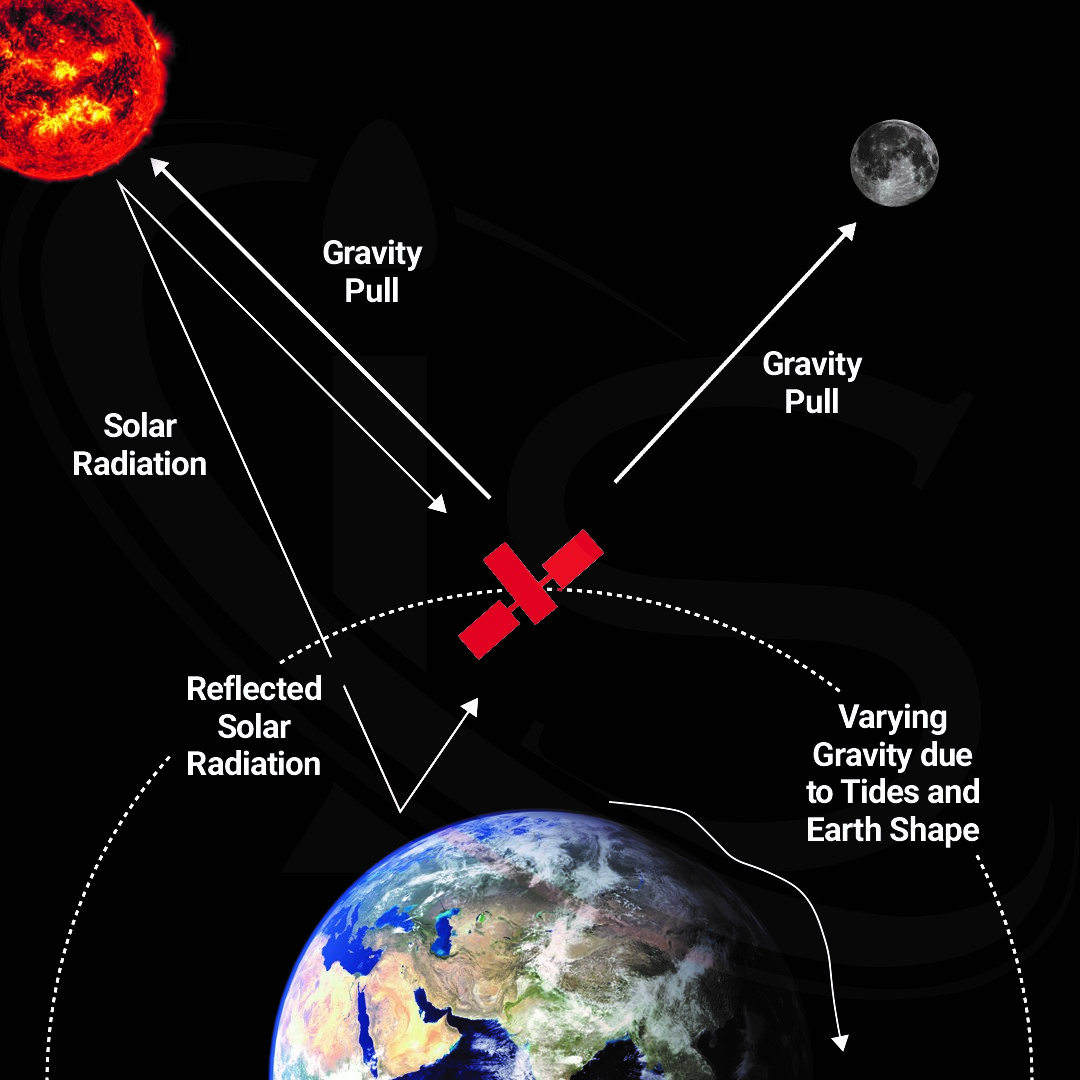

In the previous Science Capsule, we talked about how satellites communicate with Earth and the challenges that come with such long-distance interactions. One of the lesser-known difficulties satellites face when relaying information to and from Earth is that time passes at a slightly different rate in orbit than on the ground. Left unaccounted for, this becomes a major problem, especially for satellites that convey location-specific information, such as GPS signals: the resulting position errors would reach several meters within minutes and keep growing the longer the clocks went uncorrected.

The Theory of General Relativity

But why does this phenomenon occur? The answer is simply Einstein’s theory of General Relativity. By “simply” I do not mean that the theory itself is simple (it is very much not), but rather that this time difference follows directly from it. Fully explaining General Relativity would take a very long time, and the collaboration of someone who knows much more about the field than I do. For the purposes of understanding how satellites are affected, however, focusing on the basics of the theory and its practical consequences will more than suffice.

To do so, let us start with the reason why time runs differently on satellites compared to Earth. Satellites sit at a different point in Earth’s gravitational field than we do here on the surface. Because of this, the way space-time is curved by Earth’s mass differs ever so slightly between where we are and where the satellite is, and clocks tick at slightly different rates as a result. The equation for how gravity affects the space-time continuum is the following:

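$$R_{\mu\nu} - \frac{1}{2} R \, g_{\mu\nu} + \Lambda g_{\mu\nu} = \frac{8 \pi G}{c^{4}} T_{\mu\nu}$$

Where:

$R_{\mu\nu}$ is the Ricci curvature tensor

$R$ is the scalar curvature

$g_{\mu\nu}$ is the metric tensor

$\Lambda$ is the cosmological constant

$G$ is Newton’s gravitational constant

$c$ is the speed of light in vacuum

$T_{\mu\nu}$ is the stress-energy tensor

$\mu$ and $\nu$ are indices that run over the four space-time coordinates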

This equation can look pretty intimidating and, in fact, requires some knowledge of differential geometry to fully understand. Fortunately, when talking about an (approximately) spherical object like Earth, we can write the equation for the general relativistic time dilation factor as follows:

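$$\frac{\Delta t_{\text{sat}}}{\Delta t_{E}} \approx 1 + \frac{\Phi_{\text{sat}} - \Phi_{E}}{c^{2}}$$

Where:

$\Delta t_{\text{sat}}$ is the time elapsed on the satellite

$\Delta t_{E}$ is the time elapsed on Earth’s surface

$\Phi_{\text{sat}}$ is the gravitational potential at the satellite

$\Phi_{E}$ is the gravitational potential at Earth’s surface

$c$ is the speed of light in vacuum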
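One common way to arrive at a factor of this kind is to compare two clocks at rest in the field of a non-rotating spherical mass. The sketch below assumes that idealized setting, with $\Phi_{\text{sat}}$ and $\Phi_{E}$ used as labels for the gravitational potential at the satellite and at Earth’s surface, and it ignores the satellite’s orbital motion and Earth’s rotation.

```latex
\begin{align*}
\frac{\Delta t_{\text{sat}}}{\Delta t_{E}}
  &= \sqrt{\frac{1 + 2\Phi_{\text{sat}}/c^{2}}{1 + 2\Phi_{E}/c^{2}}} \\
  &\approx 1 + \frac{\Phi_{\text{sat}} - \Phi_{E}}{c^{2}}
  \qquad \text{since } |\Phi|/c^{2} \ll 1 \text{ near Earth}
\end{align*}
```

Near Earth, $|\Phi|/c^{2}$ is smaller than $10^{-9}$, so the first-order form in the second line loses nothing that matters at the precision discussed here.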

The only unknown that we need to solve for on the right side of the equation is the gravitational potential of the satellite. This can be done with an integral of Earth’s gravitational field with respect to the radius r, and will yield the following solution:

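$$\Phi(r) = -\frac{G M}{r}$$

Where:

$\Phi(r)$ is the gravitational potential at a distance $r$ from Earth’s center, taken to be zero infinitely far away

$G$ is Newton’s gravitational constant, approximately $6.674 \times 10^{-11}\ \text{m}^{3}\,\text{kg}^{-1}\,\text{s}^{-2}$

$M$ is the mass of Earth, approximately $5.972 \times 10^{24}\ \text{kg}$

$r$ is the distance from Earth’s center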
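For readers who want to see the intermediate step, the integral described above can be written out as follows. This is a sketch under the usual textbook assumptions, which the text does not state explicitly: Earth is treated as a spherically symmetric mass $M$, the field strength outside it is the Newtonian $g(r) = GM/r^{2}$, and the potential is taken to be zero infinitely far away.

```latex
\begin{align*}
\Phi(r) &= -\int_{r}^{\infty} g(r')\,\mathrm{d}r'
         = -\int_{r}^{\infty} \frac{G M}{r'^{2}}\,\mathrm{d}r' \\
        &= -G M \left[-\frac{1}{r'}\right]_{r}^{\infty}
         = -\frac{G M}{r}
\end{align*}
```

Because the potential is negative and shrinks in magnitude with distance, a satellite at a larger $r$ sits at a higher potential than the surface.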

Some Concrete Numbers

All that is left to do is plug in the distance of the satellite from Earth’s center, as well as the time elapsed on Earth’s surface. The result is the difference between the time elapsed on the satellite and on Earth. It’s worth noting that, because all satellites are at a higher gravitational potential than Earth’s surface, this gravitational effect always makes more time elapse on the satellite than on Earth.

As an example, we can use an MEO satellite located 15,000 km above Earth’s surface, with a time elapsed on Earth of 24 hours, or 86,400 seconds. Taking Earth’s mean radius as 6,371 km, the satellite is about 21,371 km from Earth’s center. Plugging everything in, we get a time dilation of approximately 0.000042 seconds, or about 42 microseconds. This means that over the course of a full day here on Earth, the satellite would have experienced about 42 microseconds more than we would have. While that may not seem like much at first glance, light travels more than 12 km in that time, so for the very precise timing that satellites often rely on, the difference becomes quite significant. This is why it is essential to take these general relativistic corrections into account when dealing with satellite-Earth communications.
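The calculation can be reproduced in a few lines of Python. This is a minimal sketch under stated assumptions: the first-order (weak-field) form of the time dilation factor, a potential of $-GM/r$, and standard reference values for Earth’s $GM$, mean radius, and the speed of light, none of which are quoted in the text. The function and constant names are illustrative. It also converts the clock offset into the distance light travels in that time, which gives a feel for how large a ranging error an uncorrected clock could cause.

```python
# Standard reference values (assumptions; the article does not quote them).
GM_EARTH = 3.986004418e14   # Earth's gravitational parameter G*M, in m^3/s^2
R_EARTH = 6.371e6           # Earth's mean radius, in m
C = 299_792_458.0           # speed of light in vacuum, in m/s


def potential(r_m: float) -> float:
    """Newtonian gravitational potential -GM/r at r_m meters from Earth's center."""
    return -GM_EARTH / r_m


def gr_clock_offset(altitude_m: float, elapsed_earth_s: float) -> float:
    """Extra time (in seconds) elapsed on a satellite clock relative to a
    surface clock, using only the first-order gravitational term.
    The satellite's speed and Earth's rotation are deliberately ignored."""
    delta_phi = potential(R_EARTH + altitude_m) - potential(R_EARTH)
    return elapsed_earth_s * delta_phi / C**2


# The example from the text: 15,000 km altitude, one day on Earth.
offset_s = gr_clock_offset(altitude_m=15_000e3, elapsed_earth_s=86_400.0)
range_error_m = offset_s * C  # distance light covers during the clock offset

print(f"clock offset after one day: {offset_s * 1e6:.1f} microseconds")
print(f"light-travel distance in that time: {range_error_m / 1000:.1f} km")
```

Changing `altitude_m` shows how the gravitational offset grows with height: it is zero at the surface and levels off for very distant satellites, since the potential itself approaches zero far from Earth.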

It is also worth pointing out that satellites move at high enough speeds that some amount of special relativistic corrections would also need to be implemented to get the fully accurate time dilation. However, that is a topic for another time.